MLflow is the largest open source AI engineering platform. MLflow enables teams of all sizes to debug, evaluate, monitor, and optimize production-quality AI agents, LLM applications, and ML models while controlling costs and managing access to models and data. With over 30 million monthly downloads, thousands of organizations rely on MLflow each day to ship AI to production with confidence.

MLflow's comprehensive feature set for agents and LLM applications includes production-grade observability, evaluation, prompt management, an AI Gateway for managing costs and model access, and more. Learn more at MLflow for LLMs and Agents.

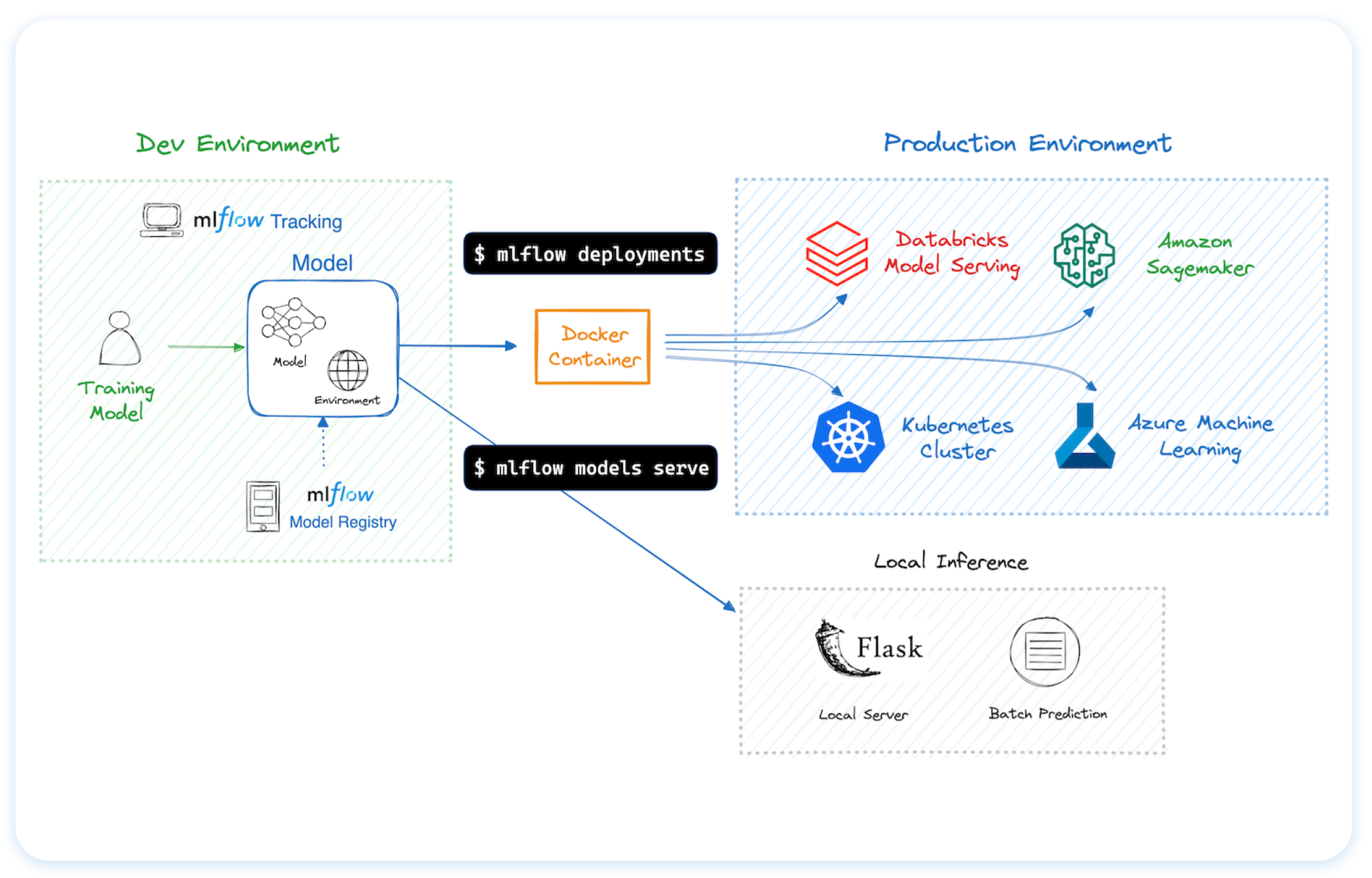

For machine learning (ML) model development, MLflow provides experiment tracking, model evaluation capabilities, a production model registry, and model deployment tools.

To install the MLflow Python package, run the following command:

```shell
pip install mlflow
```

MLflow is the only platform that provides a unified solution for all your AI/ML needs, including LLMs, Agents, Deep Learning, and traditional machine learning.

- 🔍 Tracing / Observability: Trace the internal states of your LLM/agentic applications for debugging quality issues and monitoring performance with ease.
- 📊 LLM Evaluation: A suite of automated model evaluation tools, seamlessly integrated with experiment tracking to compare results across multiple versions.
- 🤖 Prompt Management: Version, track, and reuse prompts across your organization, helping maintain consistency and improve collaboration in prompt development. Automatically optimize prompts using data-driven algorithms instead of manual trial-and-error.
- 🌐 AI Gateway: Route requests to any LLM provider through a secure proxy, with built-in credential management, cost tracking, guardrails, traffic splitting for A/B testing, automatic failover, and more.
- 📝 Experiment Tracking: Track your models, parameters, metrics, and evaluation results in ML experiments and compare them using an interactive UI.
- 💾 Model Registry: A centralized model store designed to collaboratively manage the full lifecycle and deployment of machine learning models.
- 🚀 Deployment: Tools for seamless model deployment to batch and real-time scoring on platforms such as Docker, Kubernetes, Azure ML, and AWS SageMaker.
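The traffic-splitting and failover behavior listed for the AI Gateway can be illustrated with a small, self-contained sketch. This is plain Python, not MLflow's gateway API or configuration format: the route table, the provider names, and the `call_provider` stub are all hypothetical, and `provider_a` is simulated as unavailable so the failover path is exercised.

```python
import random

# Hypothetical route table: provider name -> share of traffic.
# Illustration only; this is NOT MLflow's AI Gateway API.
ROUTES = {"provider_a": 0.8, "provider_b": 0.2}


def call_provider(name: str, prompt: str) -> str:
    # Stand-in for a real LLM call; provider_a is simulated as down.
    if name == "provider_a":
        raise ConnectionError(f"{name} unavailable")
    return f"{name}: {prompt}"


def route(prompt: str, rng: random.Random) -> str:
    names = list(ROUTES)
    # Traffic splitting: choose a primary provider in proportion to its weight
    primary = rng.choices(names, weights=[ROUTES[n] for n in names], k=1)[0]
    # Automatic failover: try the primary first, then the remaining providers
    for name in [primary] + [n for n in names if n != primary]:
        try:
            return call_provider(name, prompt)
        except ConnectionError:
            continue
    raise RuntimeError("all providers failed")


print(route("Hi!", random.Random(0)))  # prints "provider_b: Hi!"
```

Because `provider_a` always fails in this stub, every request ends up at `provider_b`, either directly or through failover. Weighted random selection gives an approximate A/B split over many requests, and the ordered retry loop supplies the failover; a real gateway would typically add timeouts and health checks on top.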

You can run MLflow in many different environments, including local machines, on-premise servers, and cloud infrastructure.

Trusted by thousands of organizations, MLflow is now offered as a managed service by most major cloud providers:

For hosting MLflow on your own infrastructure, please refer to this guidance.

MLflow is natively integrated with popular AI agent frameworks and machine learning libraries. MLflow supports all LLM providers, AI tools and programming languages.

Tracing (Observability)

MLflow Tracing provides observability for many AI agent and LLM application libraries, such as OpenAI, LangChain, LlamaIndex, DSPy, AutoGen, and more. To enable automatic tracing, call `mlflow.<library>.autolog()` (for example, `mlflow.openai.autolog()`) before running your application. Refer to the documentation for customization and manual instrumentation.

```python
import mlflow
from openai import OpenAI

# Enable tracing for OpenAI
mlflow.openai.autolog()

# Query OpenAI LLM normally
response = OpenAI().chat.completions.create(
    model="gpt-4o-mini",
    messages=[{"role": "user", "content": "Hi!"}],
    temperature=0.1,
)
```

Then navigate to the "Traces" tab in the MLflow UI to find the trace records for the OpenAI query.

Evaluating LLMs, Prompts, and Agents

The following example runs automatic evaluation for a question-answering task using a built-in LLM judge (`Correctness`) and a custom guideline-based LLM judge (`Guidelines`).

```python
import os

import openai
import mlflow
from mlflow.genai.scorers import Correctness, Guidelines

client = openai.OpenAI(api_key=os.getenv("OPENAI_API_KEY"))

# 1. Define a simple QA dataset
dataset = [
    {
        "inputs": {"question": "Can MLflow manage prompts?"},
        "expectations": {"expected_response": "Yes!"},
    },
    {
        "inputs": {"question": "Can MLflow create a taco for my lunch?"},
        "expectations": {"expected_response": "No, unfortunately, MLflow is not a taco maker."},
    },
]

# 2. Define a prediction function to generate responses.
# Its parameter name must match the key under "inputs" ("question").
def predict_fn(question: str) -> str:
    response = client.chat.completions.create(
        model="gpt-4o-mini", messages=[{"role": "user", "content": question}]
    )
    return response.choices[0].message.content

# 3. Run the evaluation
results = mlflow.genai.evaluate(
    data=dataset,
    predict_fn=predict_fn,
    scorers=[
        # Built-in LLM judge
        Correctness(),
        # Custom criteria using LLM judge
        Guidelines(name="is_english", guidelines="The answer must be in English"),
    ],
)
```

Navigate to the "Evaluations" tab in the MLflow UI to find the evaluation results.

Tracking Model Training

The following example trains a simple regression model with scikit-learn, while enabling MLflow's autologging feature for experiment tracking.

```python
import mlflow
from sklearn.model_selection import train_test_split
from sklearn.datasets import load_diabetes
from sklearn.ensemble import RandomForestRegressor

# Enable MLflow's automatic experiment tracking for scikit-learn
mlflow.sklearn.autolog()

# Load the training dataset
db = load_diabetes()
X_train, X_test, y_train, y_test = train_test_split(db.data, db.target)

rf = RandomForestRegressor(n_estimators=100, max_depth=6, max_features=3)

# MLflow triggers logging automatically upon model fitting
rf.fit(X_train, y_train)
```

Once the above code finishes, run the following command in a separate terminal and open the MLflow UI at the printed URL. An MLflow Run should have been created automatically, tracking the training dataset, hyperparameters, performance metrics, the trained model, its dependencies, and more.

```shell
mlflow server
```

- For help or questions about MLflow usage (e.g., "how do I do X?"), visit the documentation.

- In the documentation, you can also ask questions to our AI-powered chatbot by clicking the "Ask AI" button in the bottom-right corner.

- Join virtual events such as office hours and meetups.

- To report a bug, file a documentation issue, or submit a feature request, please open a GitHub issue.

- For release announcements and other discussions, please subscribe to our mailing list (mlflow-users@googlegroups.com) or join us on Slack.

We happily welcome contributions to MLflow!

- Submit bug reports and feature requests

- Contribute to good-first-issue and help-wanted issues

- Write about MLflow and share your experience

Please see our contribution guide to learn more about contributing to MLflow.

If you use MLflow in your research, please cite it using the "Cite this repository" button at the top of the GitHub repository page, which will provide you with citation formats including APA and BibTeX.

MLflow is currently maintained by the following core members with significant contributions from hundreds of exceptionally talented community members.